As much as I love Wave Analytics, it is not very fun to deploy from Sandbox (at this time). There are many different things to keep in mind when moving Wave from one environment to another (even if it is just Sandbox to Sandbox). Knowing these shortcomings ahead of your pending deployment will save you a big headache, as you can plan accordingly. There is a good bit to discuss, so let’s jump right in!

Apps

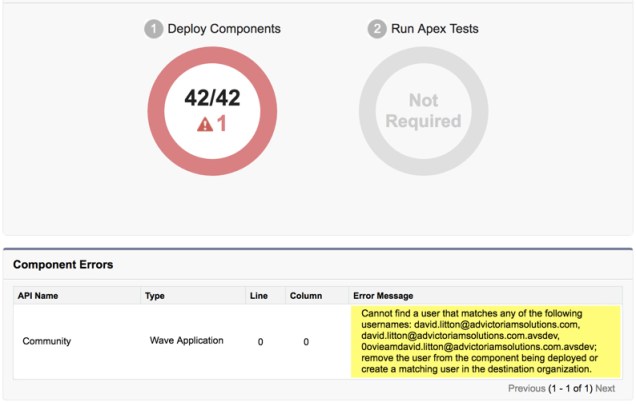

These are nice and easy to deploy, but if your App is shared with specific Users, you have to be careful about the Username naming convention. This can be a problem if you’re a consultant who was set up in a Sandbox separately from the Production environment, and the naming convention for your Username differs between the two. It can also happen if you shared the App with a specific Community User who was only created in your Source Org and not your Target Org. Either scenario will cause an error.

Dataflow

This is something that you need to be careful with. The Dataflow that you build in your Source Org will overwrite the existing Dataflow in your Target Org. This means that if the Target Org already has a Dataflow, anything in it that you want to keep must also be present in the Source Org’s Dataflow, or it will be deleted.
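Here is a minimal sketch of how you might check for that before deploying. It assumes you have downloaded the Dataflow definition JSON from both Orgs and that each definition is an object keyed by node name. The node names below and the helper `nodes_missing_from_source` are made up for illustration.

```python
def nodes_missing_from_source(source_dataflow, target_dataflow):
    """Return node names that exist in the Target Org's Dataflow
    but not in the Source Org's (these would be lost on deploy)."""
    return sorted(set(target_dataflow) - set(source_dataflow))


# Hypothetical, heavily trimmed Dataflow definitions.
source = {
    "Extract_Opportunity": {"action": "sfdcDigest"},
    "Register_Opportunity": {"action": "sfdcRegister"},
}
target = {
    "Extract_Opportunity": {"action": "sfdcDigest"},
    "Register_Opportunity": {"action": "sfdcRegister"},
    "Extract_Case": {"action": "sfdcDigest"},
    "Register_Case": {"action": "sfdcRegister"},
}

for name in nodes_missing_from_source(source, target):
    print("Would be deleted from Target Org:", name)
```

This only compares node names. A node that exists in both Orgs but has different parameters would not be flagged, so it is a first check rather than a full diff.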

The key with Dataflows is to run them immediately after deploying, so all of your Datasets are populated as quickly as possible. Make sure your Target Org has all the fields that the Dataflow references, and that the Analytics Cloud Integration User has Field-Level Security (FLS) access to them. The deployment itself will succeed even if those requirements aren’t met; the Dataflow will only error later, when you run it.
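To know which fields to check, you can pull the list straight out of the Dataflow definition. The sketch below assumes the extract nodes use the `sfdcDigest` action with an `object` and a `fields` list under `parameters`. The sample Dataflow and the helper name `digest_fields` are hypothetical.

```python
from collections import defaultdict


def digest_fields(dataflow):
    """Map each Salesforce object to the set of field names
    that its sfdcDigest nodes extract."""
    fields = defaultdict(set)
    for node in dataflow.values():
        if node.get("action") != "sfdcDigest":
            continue  # only extract nodes reference Salesforce fields directly
        params = node.get("parameters", {})
        for field in params.get("fields", []):
            fields[params.get("object")].add(field["name"])
    return dict(fields)


# Hypothetical, trimmed Dataflow definition.
dataflow = {
    "Extract_Opportunity": {
        "action": "sfdcDigest",
        "parameters": {
            "object": "Opportunity",
            "fields": [{"name": "Id"}, {"name": "Amount"}, {"name": "Region__c"}],
        },
    },
    "Register_Opportunity": {
        "action": "sfdcRegister",
        "parameters": {"alias": "Opportunities", "source": "Extract_Opportunity"},
    },
}

for obj, names in digest_fields(dataflow).items():
    print(obj, sorted(names))
```

The output is a checklist: every field listed needs to exist in the Target Org and be readable by the Integration User before the first run.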

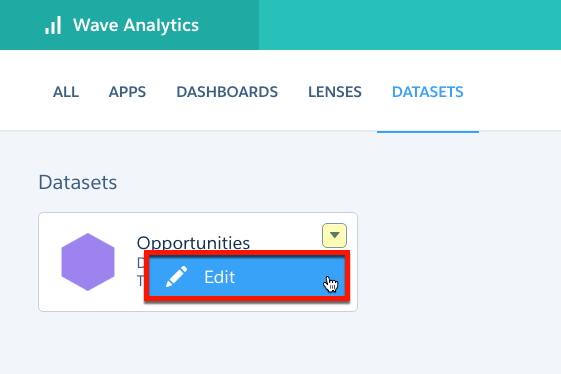

Datasets

I’m going to go out on a limb and just say that you shouldn’t deploy any of your Datasets. Let your Dataflow create them when it runs for the first time. If you do anything out of sequence, you run the risk of the Dataset Name being changed, and that can cause additional issues when you’re deploying complex Dashboards (as I’ll touch on in more detail shortly).

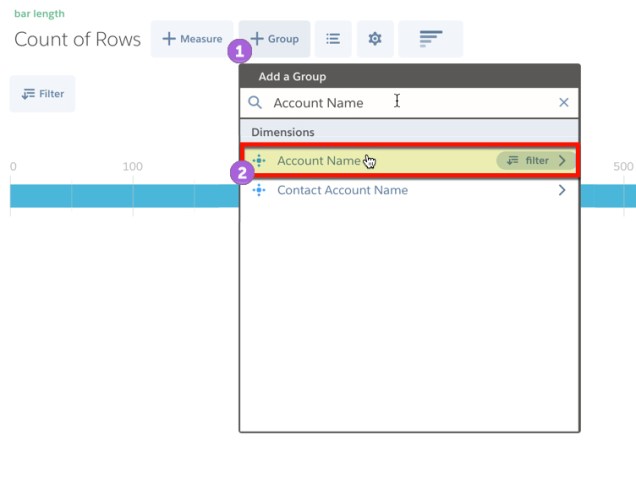

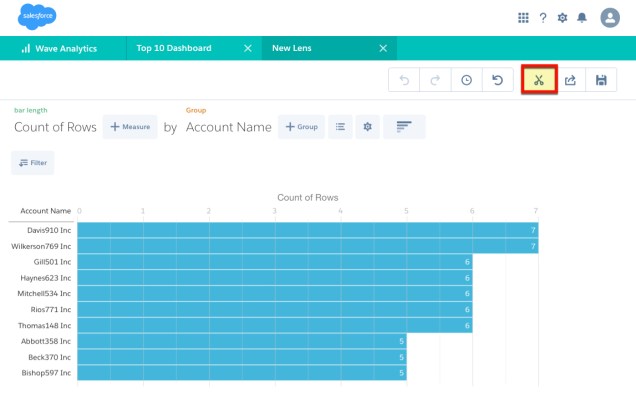

Dashboards & Lenses

Dashboards and Lenses are annoyingly close to deploying cleanly. They will show you an error when you first open them, because the Datasets they’re targeting are empty.

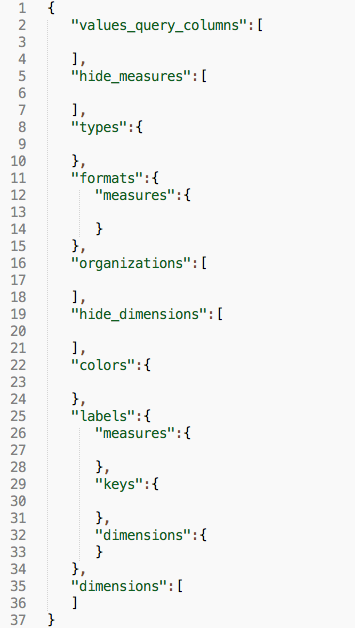

What you have to do is make sure your Dataflow has successfully populated the new Datasets, and then go into the JSON of your Dashboard or Lens and make a few adjustments. A note on the above error: it will make you press Continue for every Dataset in your Dashboard… so don’t think it’s broken if you have 10 Datasets, you just have to click 10 times.

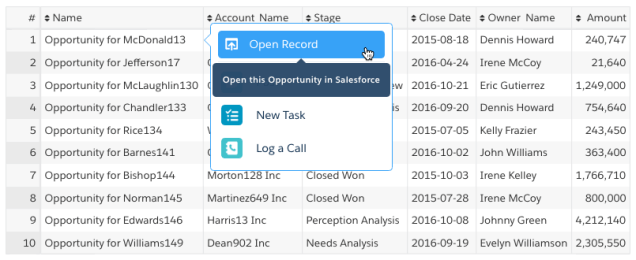

The first adjustment goes against something you’ve heard a million times… don’t hard-code IDs in Salesforce!! Well, in Wave, you’ve got no other choice. When you deploy a new Dataset, it gets a new Dataset ID in your Target Org. Your Dashboards and Lenses will still be referencing the old Dataset ID, so you need to go in and do a “Find and Replace” for the Dataset ID. This can be pretty easy if you’ve got a simple Dashboard with one Dataset, but once you get into double digits, you potentially run into some of the other areas of trouble…
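If you have more than a handful of Datasets, the “Find and Replace” can be scripted. This is a rough sketch, assuming you have copied the Dashboard JSON out as text and built your own mapping of old to new Dataset IDs. The IDs and the JSON fragment below are placeholders, not real values.

```python
import re


def replace_dataset_ids(dashboard_json, id_map):
    """Swap every old Dataset ID for its new one in a single pass."""
    if not id_map:
        return dashboard_json
    # One combined pattern, so a replaced ID is never replaced a second time.
    pattern = re.compile("|".join(re.escape(old) for old in id_map))
    return pattern.sub(lambda match: id_map[match.group(0)], dashboard_json)


# Placeholder IDs: look up the real ones in your Source and Target Orgs.
id_map = {
    "0FbSOURCE000001": "0FbTARGET000001",
    "0FbSOURCE000002": "0FbTARGET000002",
}

# Simplified, hypothetical fragment of Dashboard JSON.
dashboard_json = (
    '{"datasets": [{"id": "0FbSOURCE000001", "name": "Opportunities"},'
    ' {"id": "0FbSOURCE000002", "name": "Cases"}]}'
)

print(replace_dataset_ids(dashboard_json, id_map))
```

Paste the result back into the Dashboard’s JSON editor and save. Working on the raw text rather than the parsed JSON means the IDs get caught wherever they appear.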

The Dataset Name is also used inside the Connector, aka dataSourceLinks (how you link Datasets together so they dynamically filter), and in any PIGQL. So, if you deployed a Dataset incorrectly and the Name changed, you’re also going to have to update the Name in these spots, similar to how you did with the ID.
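Before changing any Names, it helps to see everywhere the old Name is referenced. The sketch below walks the parsed Dashboard JSON and reports the path of every string value containing the Name. The Dashboard structure shown is a simplified assumption, and `find_name_references` is a made-up helper.

```python
def find_name_references(node, name, path="$"):
    """Yield the JSON path of every string value that contains the Dataset Name."""
    if isinstance(node, dict):
        for key, value in node.items():
            yield from find_name_references(value, name, f"{path}.{key}")
    elif isinstance(node, list):
        for index, value in enumerate(node):
            yield from find_name_references(value, name, f"{path}[{index}]")
    elif isinstance(node, str) and name in node:
        yield path


# Simplified, hypothetical Dashboard JSON.
dashboard = {
    "dataSourceLinks": [
        {"fields": [{"dataSourceName": "Opportunities", "fieldName": "AccountId"}]}
    ],
    "steps": {
        "step_1": {"query": {"pigql": 'q = load "Opportunities"; q = group q by all;'}}
    },
}

for location in find_name_references(dashboard, "Opportunities"):
    print(location)
```

Keep in mind this is a plain substring match, so a short Name that happens to be part of a longer one will show up as well. Treat the output as a list of places to review, not a list of guaranteed hits.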

Recipes

Recipes became GA in Spring ’17. They’re extremely powerful and are getting even stronger with the Summer ’17 release, when they become accessible through the REST API. They let you filter and transform an existing Dataset extremely easily: you can join other Datasets, bucket fields, add filters, and more. The issue here is that they don’t live anywhere in the metadata (at this time). So, whatever you create in a Recipe, you’re going to have to manually re-create in your Target Org. At the rate they’re improving all aspects of Wave, I am hopeful this becomes deployable with the Winter ’18 release.

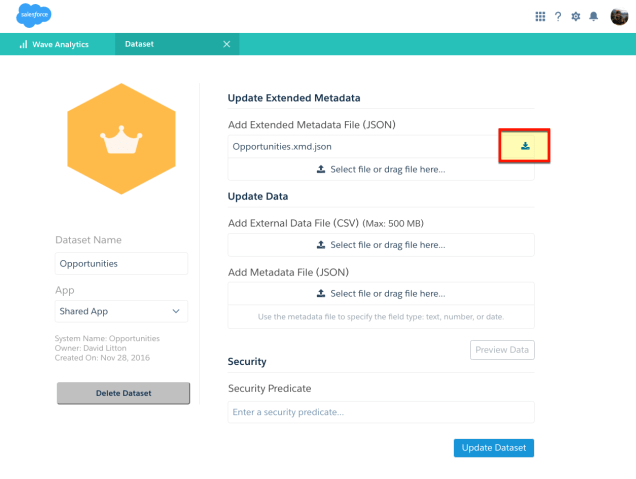

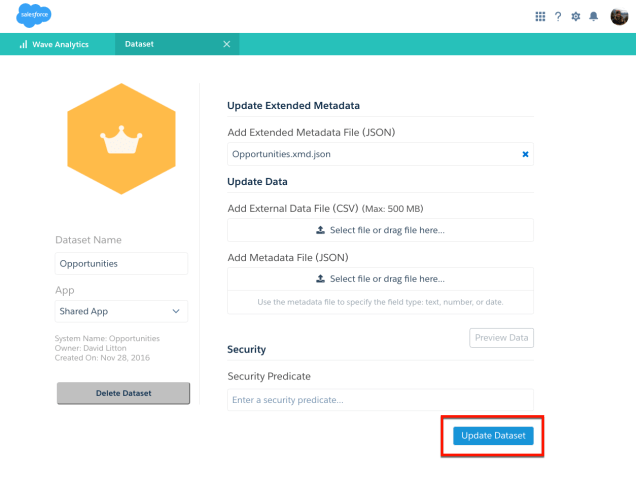

Security Predicates

Unfortunately, Security Predicates don’t live anywhere (at this time) that lets you successfully deploy them. Luckily, they are typically straightforward, so it’s usually a quick copy & paste to get your Security Predicate moved into the new environment. When you’re adding a Security Predicate to your new environment, you need to make sure you meet these basic requirements:

- Analytics Security User has READ Access to all referenced Fields

- The Running User (you) has READ Access to all referenced Fields

In short, make sure you correctly deployed the FLS for the Fields that you had in your previous environment, or you’ll be running around in circles.

RECAP

Sometimes I wonder why I bother to deploy this at all. It would be (at the time of this post) almost as much effort to simply copy & paste the work over to the new environment. Be careful of the known shortcomings and plan accordingly. Depending on the size of your deployment, these issues will add time, so build that into your plan.